So, what is the difference between 720p and 1080p? What about 1080i vs 1080p?

This is one of the problems with home theater technology. There is a seemingly endless list of acronyms, numbers and technical terms to understand.

For instance, many people buy 1080p televisions without really understanding what it means.

And the resolution of high-definition images is an area where people get very confused.

They don’t realize that a little understanding of these terms can help them get the best from their new TVs.

But don’t despair; it’s a bit like riding a bike while balancing a fish on your head. It’s not that difficult once you get the hang of it. 🙂

Read on to understand some of the basic terms.

What Is the 1080p Video Format?

1080p refers to the resolution of an image that is sent to your TV.

A 1080p resolution can also be called ‘Full HD’.

It is also often used to describe a 1080p television that has a widescreen 1920 x 1080 native resolution. However, the true meaning of 1080p refers to the resolution of the image rather than a TV resolution.

Go here for an article on the difference between image and TV resolution.

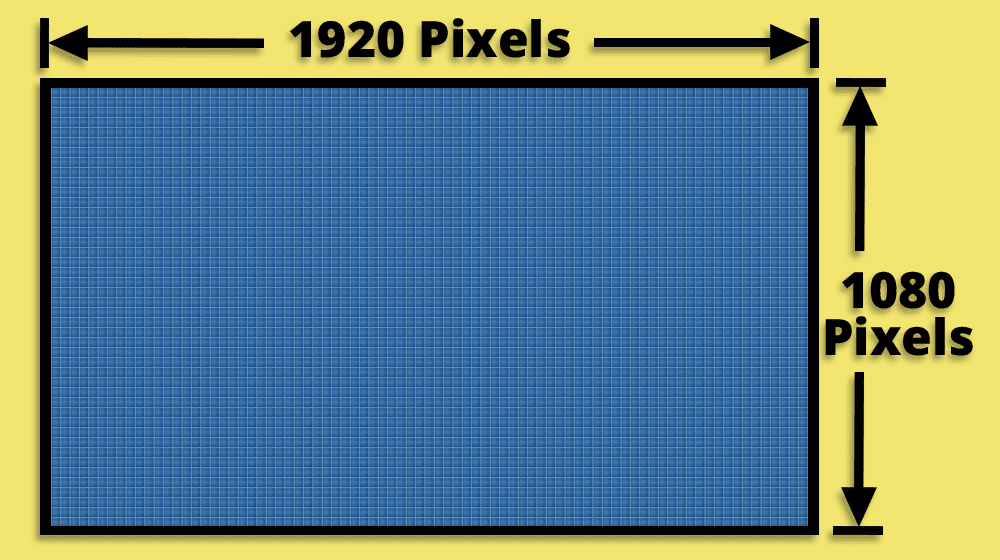

A 1080p image is a high-definition picture that is created with a 1920 x 1080 resolution.

This means that each frame of the picture has a widescreen (16:9) aspect ratio.

Each frame is 1,920 pixels across (horizontal) and 1,080 pixels down (vertical). Therefore, in total, it has just over 2 million pixels of detail (1,920 × 1,080 = 2,073,600).
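If you want to check this arithmetic yourself, here is a small Python sketch (the function name `describe_resolution` is just an illustrative choice). It multiplies the width by the height to get the pixel count, and divides both numbers by their greatest common divisor to get the familiar widescreen ratio.

```python
from math import gcd


def describe_resolution(width: int, height: int) -> tuple[int, str]:
    """Return the total pixel count and the reduced aspect ratio of one frame."""
    total_pixels = width * height
    divisor = gcd(width, height)  # largest number that divides both sides exactly
    aspect_ratio = f"{width // divisor}:{height // divisor}"
    return total_pixels, aspect_ratio


pixels, aspect = describe_resolution(1920, 1080)
print(f"1080p: {pixels:,} pixels per frame, aspect ratio {aspect}")
# prints: 1080p: 2,073,600 pixels per frame, aspect ratio 16:9
```

The same function gives 921,600 pixels and a 16:9 ratio for a 1280 × 720 image.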

The ‘p’ at the end of 1080p is also important.

It means that the image uses progressive scan. This is a better way of creating images than interlaced scan, which is the traditional way of transmitting television images.

Progressive scan produces a better-quality image because each frame is drawn in a single pass down the screen, from line 1 to line 1080.

This produces a very stable and clear picture.

Progressive scan is also the way that flat-screen televisions draw an image, which is another reason why a progressive scan image is better for this type of television.

The most common sources of a true 1080p image are Blu-ray players and some PlayStation/Xbox games.

What Is the 1080i Video Format?

A 1080i image is still regarded as high-definition.

As you may be able to guess from the number, a 1080i image has the same resolution as 1080p and is also widescreen: each frame has a horizontal resolution of 1,920 pixels and a vertical resolution of 1,080 pixels. Therefore, it can also be called ‘Full HD’.

The big difference is in the letter at the end – the ‘i’.

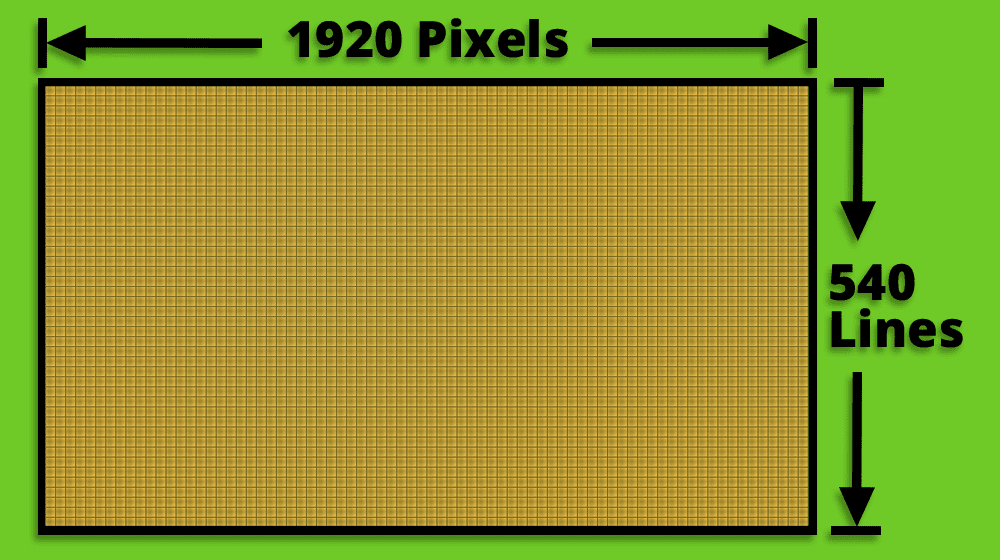

This means that a 1080i image is transmitted as an interlaced scan picture, which means each frame of 1080 lines is actually drawn in two passes.

First, all the odd lines are drawn – from 1 to 1079. Then all the even lines are drawn – from 2 to 1080.

Each of these passes is known as a field – and so two fields (of 540 lines each) make up one frame of 1080 lines.

Therefore, with an interlaced image, it takes slightly longer for your eyes to see a complete frame when compared to progressive scan.

So although a TV draws these fields very quickly (50 or 60 fields per second depending on where you live in the world, which works out at 25 or 30 complete frames per second), your eyes can often detect this as a slight flicker or an unstable image.

This type of image resolution is commonly used by TV companies when they transmit an ‘HD’ image – and they use this method because it takes less bandwidth to transmit an interlaced image than a progressive one.
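The odd/even split described above can be sketched in a few lines of Python. This follows the article’s description of the odd lines being drawn first; in practice, which field comes first depends on the video standard, and `split_into_fields` is just an illustrative name.

```python
def split_into_fields(frame_lines: int = 1080) -> tuple[list[int], list[int]]:
    """Split a frame's line numbers (counting from 1) into two interlaced fields."""
    lines = range(1, frame_lines + 1)
    odd_field = [n for n in lines if n % 2 == 1]   # first pass: 1, 3, ..., 1079
    even_field = [n for n in lines if n % 2 == 0]  # second pass: 2, 4, ..., 1080
    return odd_field, even_field


odd, even = split_into_fields()
print(len(odd), odd[0], odd[-1])     # 540 1 1079
print(len(even), even[0], even[-1])  # 540 2 1080
```

Each field holds 540 lines, and only the two together make up the full 1,080-line frame.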

What Is the 720p Video Format?

The third type of high-definition image is known as 720p.

It has fewer pixels compared to a 1080p/1080i image – but is still classed as high-definition and has a high level of detail.

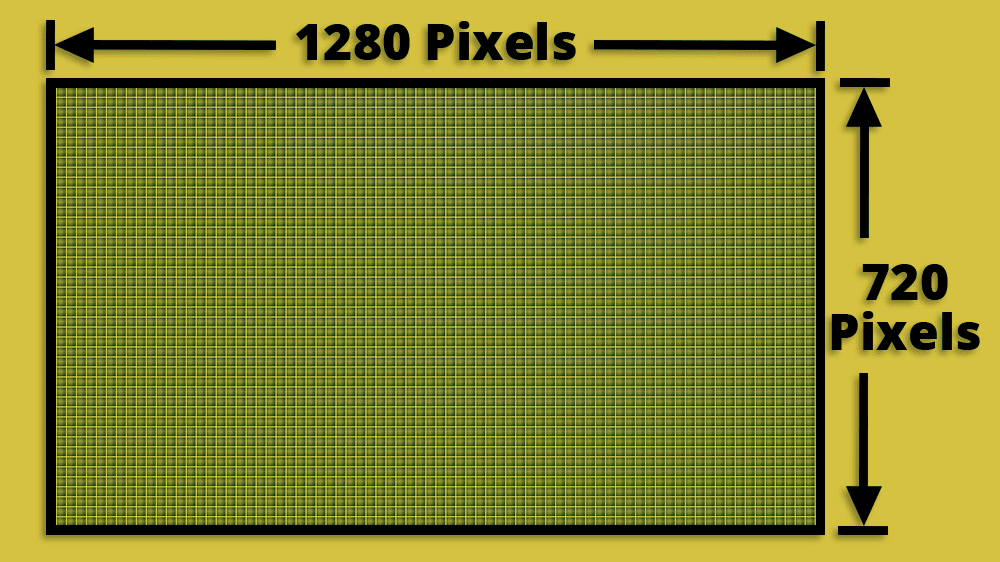

It has a widescreen aspect ratio with a horizontal resolution of 1280 pixels and a vertical resolution of 720 pixels.

Therefore, in total, it has 921,600 pixels of information (1,280 × 720), compared to 2,073,600 pixels with 1080i and 1080p images, which is 2.25 times as many.

As with 1080p, the ‘p’ at the end indicates it is a progressive scan image that is drawn in one pass down the screen.

Like a 1080i image, this resolution is commonly used by TV companies to transmit an ‘HD’ picture.

It has fewer pixels than a 1080i image and so requires less bandwidth to send, but it is transmitted in progressive scan, which is an advantage over an interlaced image.
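To see why broadcasters care about bandwidth, here is a rough sketch that counts how many pixels each format draws per second. It assumes a 60 Hz region and ignores compression, so these are raw, uncompressed pixel counts rather than the real bit rates a broadcaster uses. The `FORMATS` table and `raw_pixel_rate` function are illustrative names, and 1080p at 60 frames per second is included only as a comparison point.

```python
# Hypothetical comparison table: (width, lines per pass, passes per second).
# Assumes a 60 Hz region; a 50 Hz region would scale every figure by 50/60.
FORMATS = {
    "1080i": (1920, 540, 60),   # 60 fields per second, each holding half the lines
    "720p": (1280, 720, 60),    # 60 complete progressive frames per second
    "1080p": (1920, 1080, 60),  # comparison point only: 60 complete frames per second
}


def raw_pixel_rate(width: int, lines: int, passes_per_second: int) -> int:
    """Uncompressed pixels drawn per second (ignores compression and blanking)."""
    return width * lines * passes_per_second


for name, spec in FORMATS.items():
    print(f"{name}: {raw_pixel_rate(*spec):,} pixels per second")
# 1080i: 62,208,000 pixels per second
# 720p: 55,296,000 pixels per second
# 1080p: 124,416,000 pixels per second
```

On these raw numbers, 1080i and 720p each need about half (or a little less) of the pixel rate of 1080p at 60 frames per second, and 720p needs slightly less than 1080i.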

Which Is the Best Resolution to Use on Your TV?

There is a wide range of input devices you can use with your flat-screen TV – Blu-ray players, DVD recorders, cable TV boxes and various forms of digital TV receivers.

The problem is that many of these devices offer a range of output resolutions, so it can be confusing to know which one to choose.

The best way to approach this issue is to always try to match the resolution of your input signal to the native resolution of your TV.

By doing this you are minimizing the amount of processing the TV has to perform – and so in theory you should get the best possible image for the equipment that you have.

However, just to confuse you, there are also circumstances when you may not want to do this.
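As a starting point, the matching guideline can be written as a small rule of thumb. This hypothetical `pick_output` helper chooses whichever output mode is closest to the TV’s native line count and, on a tie, prefers progressive over interlaced. It is only a sketch of the guideline, not something your TV or source box actually runs, and your eyes should still have the final say.

```python
def pick_output(modes: list[str], native_lines: int) -> str:
    """Pick the output mode closest to the TV's native line count,
    preferring progressive ('p') over interlaced ('i') on a tie."""

    def sort_key(mode: str) -> tuple[int, int]:
        lines = int(mode[:-1])  # e.g. "1080i" -> 1080
        scan_penalty = 0 if mode.endswith("p") else 1  # progressive wins ties
        return abs(lines - native_lines), scan_penalty

    return min(modes, key=sort_key)


print(pick_output(["720p", "1080i", "1080p"], 1080))  # 1080p
print(pick_output(["720p", "1080i", "1080p"], 768))   # 720p
print(pick_output(["720p", "1080i"], 1080))           # 1080i
```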

Here is a comparison of the common high-definition TV resolutions to help you decide which to use with your flat-screen TV.

1080i vs 1080p

So what do you go for when you have a choice of 1080i vs 1080p?

Well, in this instance, it is always better to go for 1080p.

Your HDTV is a progressive scan device, so it is better to send it a progressive image. If you send an interlaced image, then the TV will have to de-interlace the picture first before it can show it – and this can affect the picture quality.

A native progressive scan picture should appear sharper and flicker-free compared to an interlaced image.

This is true whether you are sending the image to a 1080p television or a 720p native resolution screen.
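To give a feel for what de-interlacing involves, here is a sketch of two simple, well-known approaches: ‘weave’, which slots the two fields back together line by line, and ‘bob’, which stretches a single field to full height by repeating each line. Real TVs typically use more sophisticated, motion-adaptive processing, and weave only gives a clean result when nothing moves between the two fields. Lines are represented here as plain strings purely for illustration.

```python
def weave(odd_field: list[str], even_field: list[str]) -> list[str]:
    """Rebuild a full frame by interleaving the odd-line and even-line fields."""
    if len(odd_field) != len(even_field):
        raise ValueError("both fields must have the same number of lines")
    frame = []
    for odd_line, even_line in zip(odd_field, even_field):
        frame.append(odd_line)   # lines 1, 3, 5, ...
        frame.append(even_line)  # lines 2, 4, 6, ...
    return frame


def bob(field: list[str]) -> list[str]:
    """Stretch one field to full height by repeating each line (line doubling)."""
    frame = []
    for line in field:
        frame.extend([line, line])
    return frame


odd = ["line 1", "line 3", "line 5"]
even = ["line 2", "line 4", "line 6"]
print(weave(odd, even))  # ['line 1', 'line 2', 'line 3', 'line 4', 'line 5', 'line 6']
print(bob(odd))          # ['line 1', 'line 1', 'line 3', 'line 3', 'line 5', 'line 5']
```

Weave keeps all the detail but can show jagged edges on moving objects, while bob avoids that at the cost of halving the vertical detail in each frame. This is one reason de-interlacing can affect picture quality.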

720p vs 1080p

This is harder to call, and if you are sitting more than 6 or 7 feet away from your screen, then many people will struggle to tell the difference regardless of the resolution you use.

Obviously, 1080p has a higher resolution than 720p, and so in most circumstances, you would choose this – and you would always choose this resolution for 1080p TVs.

However, bearing in mind our guideline of matching the image resolution and the native resolution, it could be a slight advantage to send a 720p image if you had a 720p (or 768p) screen. This would ensure that the TV doesn’t have much scaling to do before showing the image.

However, the results of this can vary between models of television and could also depend on the quality of the image coming from the source device – so the best idea may be to test both.

If you switch the output of your source between 720p and 1080p, you may notice a difference in picture quality on your 720p TV – or you may not!

If there is no noticeable difference – then who cares which one you choose?
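To put rough numbers on the scaling involved, this sketch works out how much the TV has to stretch or shrink the picture’s height to fit its panel. It looks only at the vertical line count for simplicity, and assumes a ‘768p’ panel has 768 lines (such panels are commonly 1366 × 768). The function name `vertical_scale` is illustrative.

```python
def vertical_scale(source_lines: int, panel_lines: int) -> float:
    """Factor by which the TV must scale the picture's height to fill its panel."""
    return panel_lines / source_lines


for source in (720, 1080):
    for panel in (720, 768):
        factor = vertical_scale(source, panel)
        print(f"{source}p source on a {panel}-line panel: x{factor:.3f}")
# 720p source on a 720-line panel: x1.000
# 720p source on a 768-line panel: x1.067
# 1080p source on a 720-line panel: x0.667
# 1080p source on a 768-line panel: x0.711
```

A factor close to 1 means only a small adjustment, which is why a 720p signal can suit a 720p or 768p screen. Whether it actually looks better still depends on how good your TV’s scaler is.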

720p vs 1080i

This is an interesting one.

With 720p, you have the choice of a lower resolution image (worse) with progressive scan (better) – and with 1080i, you have a higher resolution image (better) with interlaced scan (worse).

In theory, both of these have an advantage over the other – and also a disadvantage.

The one you choose may just come down to testing it on your equipment and seeing if you can see a difference.

As already mentioned, as a starting point, try matching the image and native resolution.

Therefore, use 720p for a 720p/768p TV and 1080i for a 1080p TV.

However, you may find that the stable progressive scan 720p image will look slightly better even on the 1080p screen – as the TV doesn’t have to de-interlace the image.

The best results may well come down to the quality of the equipment you are using and how well the images are scaled and de-interlaced.

Let your eyes be the judge.

Conclusion

Initially, it can seem confusing when you see all these technical terms thrown about – and it is something that you see a great deal of when looking to buy a new LED or OLED TV.

However, once you look into the details, you can see that there isn’t much to be scared of.

When it comes down to deciding which resolution to send your TV (assuming you have a choice), there isn’t always a black-and-white answer or a single ‘right’ choice.

However, if you are prepared to do a little bit of experimenting – and learn to trust your eyes – then you will get the best results from your equipment.

It’s a waste to buy a 1080p television if you don’t understand how to get the best picture that you can on the screen.

About The Author

Paul started the Home Cinema Guide to help less-experienced users get the most out of today's audio-visual technology. He has been a sound, lighting and audio-visual engineer for around 20 years. At home, he has spent more time than is probably healthy installing, configuring, testing, de-rigging, fixing, tweaking, re-installing again (and sometimes using) various pieces of hi-fi and home cinema equipment. You can find out more here.